#17 Good governance, designed for the wrong moment

If the human-AI relationship isn't explicitly designed, no governance framework will fix it.

3 in 4 companies plan to deploy agentic AI within two years, but only 1 in 5 report having a mature model for governing agents (Deloitte, 2026).

This gap isn't just a matter of speed. It's about governance being designed for the wrong moment.

I joined a panel at the Digital Regulation Cooperation Forum (DRCF)'s Responsible AI Forum, alongside the FCA, Best Practice AI and techUK, to discuss what "good" AI governance looks like.

My argument was that most AI governance thinking focuses on what happens before deployment. Model cards, audit trails and accountability frameworks all describe how an AI system works. But that's not where trust breaks. Trust breaks in the experience: in the moment of interaction between a person and an AI system.

Good AI governance should help earn trust, and that means designing the relationship between the AI system and the person using it. It means making deliberate decisions and trade-offs about how uncertainty is communicated, when humans should intervene, who decides what and when, and whether feedback actually changes the system.

A good example of this? Citizens Advice's Caddy. Citizens Advice introduced Caddy to reduce response times to client queries. In building it, they explicitly designed the human-AI partnership: when a human is in the loop, who decides what, and how the handoff works. The result: advisors are now twice as likely to report confidence in the answers they give.

That outcome didn't come from the latest shiny model. It came from the design of the human-model relationship around it.

The organisations getting AI adoption right are the ones explicitly designing the relationship between the system and the person using it. That happens at the interface: in the feedback loops, in whether someone knows their input will matter, in meaningful transparency at the point of use.

This isn't just a question for governance; it's a question for design.

"AI governance: What does 'good' look like?" at DRCF's Responsible AI Forum

For 10 years, IF has been helping large organisations build customer-facing services that scale safely, earn trust from the start and deliver long-term impact. We prototype, test, and launch AI products and services that people believe in and want to adopt, while helping organisations change the way they work in the AI age.

Learn more or talk to us.

What we’ve been up to in the studio

Opening up the untapped potential of public data

Activating public data is a government priority: when re-used commercially, it drives economic growth and fuels innovative products and services.

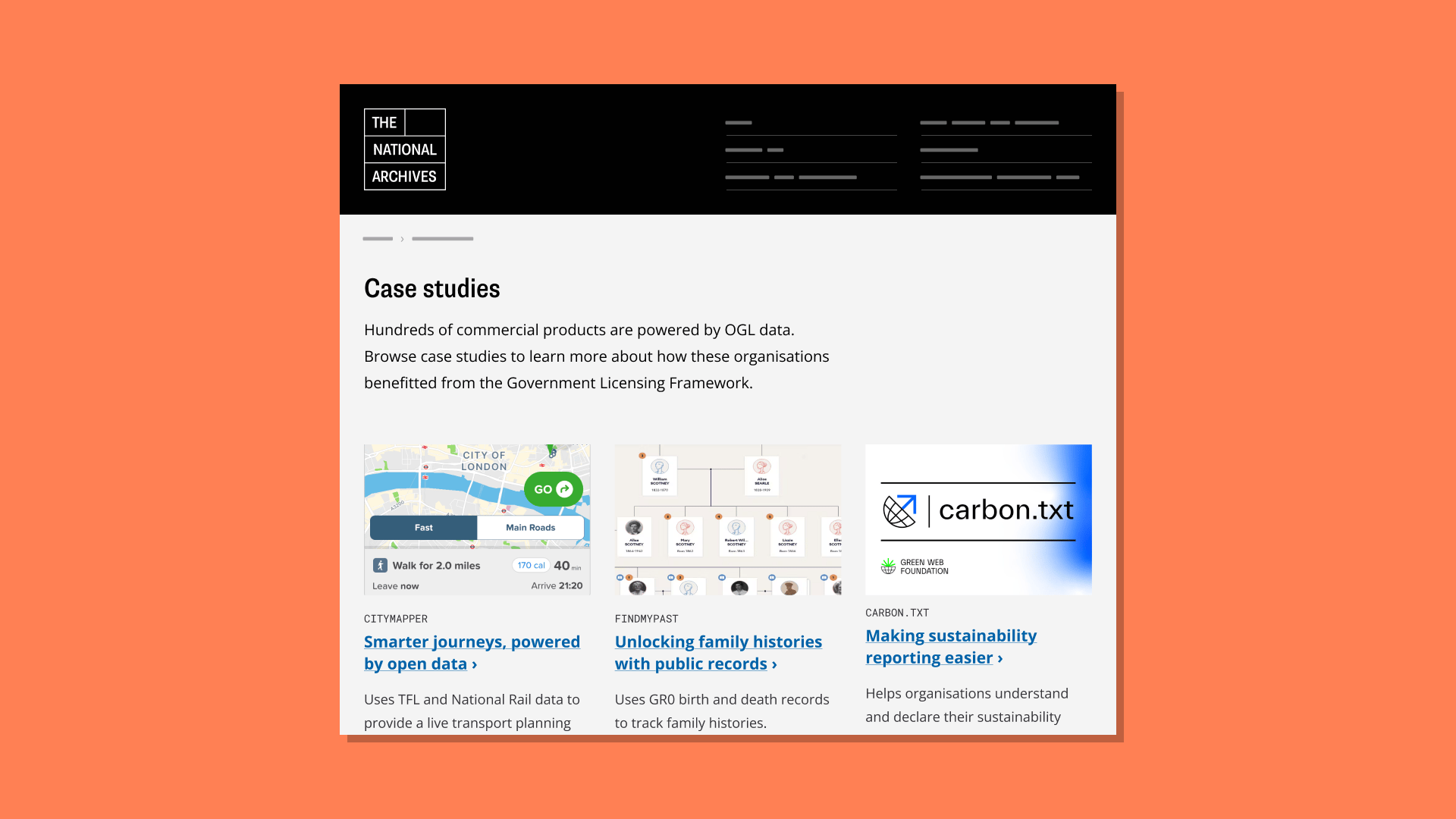

We’ve partnered with The National Archives to improve how people publish and re-use public sector information through the Government Licensing Framework and Open Government Licence. Awareness of the framework is low, and its policy-centred design makes it hard to navigate for experts and non-experts alike.

By learning from people who actually publish and re-use data, we're designing recommendations that make the framework's value clear, make it easier to understand, and help people use it confidently. That way, more people can benefit.

The National Archives: A prototype designed to make the government licensing framework easier to understand

What we’ve been reading

The City of Boston is leading the way on digital public infrastructure by investing in its own Model Context Protocol (MCP) infrastructure. As its Chief Innovation Officer, Santiago Garces, puts it: "If we want a future not locked into a handful of vendors, one where constituents' privacy is protected and online interactions are secure and reliable…this is the moment to act." Read the piece in Fast Company.

We enjoyed this campaign by the Norwegian Consumer Council demanding a better digital future. It includes a 100-page report (very pleasant to read, I promise!), a campaign film, and open letters to the Norwegian authorities and European policymakers.

The "accidental" exposure of hundreds of thousands of UK Biobank records highlights a recurring problem: even "anonymised" data is never truly anonymous. Small details like a birth date or a hospital visit can be enough to identify someone and reveal sensitive information about them. The UK Biobank is a world-class resource for health research, but without proper guardrails it will continue to expose participants' personal details and erode trust across the entire research ecosystem. Read the Guardian story.

This article by James Darling, on designing a data-enabled service by starting without data, sparked our AI and data team's imagination. What would happen if we used this approach to define what an AI model should do, so we could build an experience prototype while we collect the right data?

Final thought

As winter comes to an end, I invite you to leave the old behind and embrace the new season lightly. Digital Cleanup Day has just passed, but there's still time to make your digital presence a little lighter! What will you leave in the past?

— Valeria and the IF Team

This month’s edition was written by Valeria Adani, Partner at IF, currently working with cities across the world on AI and data-driven innovation.